System

Vocal2Piano is a robotic exoskeleton that clamps onto any acoustic piano without modification and plays a real-time harmonic accompaniment in response to live audio input or an uploaded song. Three software layers handle the signal path from microphone to key strike: Vocal2MIDI converts audio to MIDI events, MIDI2Chords reasons about harmony and generates chord targets, and MIDI2Piano translates those targets into stepper and solenoid commands for the Teensy 4.1.

Two independently sliding boards each carry 15 solenoids spaced at piano key intervals. NEMA17 steppers on GT2 belt drives reposition each board along the key span, so each board's 15 solenoids can be placed over any 15-note window within its travel. A fixed count of 30 solenoids can therefore reach far more of the keyboard than 30 stationary actuators could.

End-to-end system architecture

Pitch detection — Vocal2MIDI

Vocal2MIDI converts audio into a stream of MIDI note events. It runs two separate pipelines depending on the input source: one for live audio and one for uploaded files.

Live input offers three modes. Instrument mode runs aubio's yinfft detector on every 5.8 ms frame, fast enough to track staccato piano or guitar with under 10 ms of latency. Voice mode accumulates a 200 ms window and runs probabilistic YIN (pYIN), which uses a hidden Markov model to resolve octave ambiguity. Chord mode computes a Constant-Q Transform over 88 frequency bins aligned to the piano keys, finds up to six simultaneous energy peaks with prominence filtering, and suppresses octave duplicates. An alternative Max/MSP-native implementation using sigmund~ provides lower latency by bypassing Python entirely.
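
The chord-mode peak selection can be sketched in plain Python, assuming the Constant-Q Transform has already produced one energy value per piano key (the transform itself is not shown). The function names, the 0.1 threshold, and the neighbour-contrast test that stands in for true peak prominence are illustrative assumptions, not the project's code.

```python
LOWEST_MIDI = 21   # piano key 1 (A0) is MIDI note 21
NUM_KEYS = 88


def key_frequency(midi_note: int) -> float:
    """Centre frequency of a key-aligned bin (equal temperament, A4 = 440 Hz)."""
    return 440.0 * 2 ** ((midi_note - 69) / 12)


def pick_chord_notes(energy, max_notes=6, min_contrast=0.1):
    """Pick up to max_notes MIDI notes from an 88-bin, key-aligned energy vector.

    "Contrast" is a simplified stand-in for prominence: how far a bin rises
    above its higher neighbour. min_contrast is a hypothetical threshold.
    """
    if len(energy) != NUM_KEYS:
        raise ValueError("expected one energy value per piano key")
    peaks = []
    for i, e in enumerate(energy):
        left = energy[i - 1] if i > 0 else 0.0
        right = energy[i + 1] if i < NUM_KEYS - 1 else 0.0
        if e > left and e >= right and e - max(left, right) >= min_contrast:
            peaks.append((e, LOWEST_MIDI + i))
    peaks.sort(reverse=True)  # strongest peaks first
    kept = []
    for _, note in peaks:
        # Octave-duplicate suppression: skip a peak a whole number of octaves
        # away from a stronger peak that has already been kept.
        if any((note - k) % 12 == 0 for k in kept):
            continue
        kept.append(note)
        if len(kept) == max_notes:
            break
    return sorted(kept)
```

For example, energy at C4, E4, G4, plus a weaker C5 overtone, yields `[60, 64, 67]`: the C5 peak is dropped as an octave duplicate of the stronger C4.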

All three live modes run CREPE, a CNN trained on large-scale pitched audio, as a first-pass detector and fall back to the classical algorithms when its confidence is low. An adaptive noise gate calibrates to the ambient RMS level over the first 500 ms. The web UI connects over WebSocket and uses the Python results directly; if the Python process is not running, the UI falls back to a browser-side YIN implementation with a stability gate and dynamic noise-floor tracking.
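
A minimal sketch of the gate and the confidence fallback, assuming frames arrive as lists of float samples. The class and function names, the 2× margin over ambient RMS, and the 0.5 confidence threshold are hypothetical; only the 500 ms calibration window comes from the description above.

```python
import math


class AdaptiveNoiseGate:
    """Learns the ambient RMS during a calibration window, then gates frames against it."""

    def __init__(self, sample_rate=44100, calibration_s=0.5, margin=2.0):
        self.calibration_samples = int(sample_rate * calibration_s)
        self.margin = margin      # hypothetical: open at 2x ambient RMS (about +6 dB)
        self._sum_sq = 0.0
        self._count = 0
        self.threshold = None     # set once calibration finishes

    def is_open(self, frame):
        """Return True if this frame is loud enough to pass to pitch detection."""
        energy = sum(x * x for x in frame)
        if self.threshold is None:
            # Still calibrating: accumulate ambient energy and keep the gate closed.
            self._sum_sq += energy
            self._count += len(frame)
            if self._count >= self.calibration_samples:
                ambient_rms = math.sqrt(self._sum_sq / self._count)
                self.threshold = self.margin * ambient_rms
            return False
        return math.sqrt(energy / len(frame)) > self.threshold


def select_pitch(crepe_hz, crepe_confidence, classical_hz, min_confidence=0.5):
    """Prefer the CREPE estimate; fall back to the classical detector when unsure."""
    return crepe_hz if crepe_confidence >= min_confidence else classical_hz
```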

For uploaded files, the pipeline classifies the source and routes it accordingly: voice recordings go through pYIN for melody extraction, instrumental tracks go directly to Basic Pitch (ONNX) for polyphonic transcription, and mixed recordings are first separated by Demucs into vocal and accompaniment stems before transcription. Pipeline step events stream to the UI in real time via Server-Sent Events (SSE), and mixed files produce four output tracks.
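
The routing and step streaming might look like the following sketch. The classifier is not shown (its result is passed in as `source_type`), and the step names, the `step` event name, and the payload fields are hypothetical. The message layout (an `event:` line, a `data:` line, then a blank line) is the standard SSE wire format.

```python
import json


def plan_pipeline(source_type):
    """Return ordered (step, target) pairs for an uploaded file of the given type."""
    if source_type == "voice":
        return [("melody", "pyin")]
    if source_type == "instrumental":
        return [("transcribe", "basic_pitch")]
    if source_type == "mixed":
        # Separate first, then transcribe each stem.
        return [("separate", "demucs"),
                ("transcribe", "vocals"),
                ("transcribe", "accompaniment")]
    raise ValueError(f"unknown source type: {source_type!r}")


def sse_event(event, payload):
    """Format one SSE message: a named event with a single-line JSON data field."""
    return f"event: {event}\ndata: {json.dumps(payload)}\n\n"


def stream_steps(source_type):
    """Yield one SSE message per pipeline step, in execution order."""
    for index, (step, target) in enumerate(plan_pipeline(source_type), start=1):
        yield sse_event("step", {"index": index, "step": step, "target": target})
```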

Live mode — real-time pitch detection and piano key display

File mode — source classification, transcription pipeline, and track output

Musical reasoning — MIDI2Chords

MIDI2Chords sits between the MIDI stream from Vocal2MIDI and the hardware driver. Given a melody note, it decides what chord the piano should play — tracking the musical key, selecting the next chord from a learned progression model, and splitting the voicing across the two physical boards.

Key tracking

Every incoming note increments its pitch class in a 12-element histogram that decays by 2% per note, so recent notes weigh more heavily. On every eighth note the system runs the Krumhansl–Schmuckler key-finding algorithm, computing the Pearson correlation between the current histogram and the Krumhansl–Kessler major and minor key profiles rotated to all 24 keys. The key estimate stabilises after about four bars and tracks modulations within a few notes of the change.
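
A sketch of the decaying histogram and the 24-key correlation, using the published Krumhansl–Kessler probe-tone profiles. The `KeyTracker` name is hypothetical, and the sketch interprets "every eighth note" as every eighth incoming note event, which is an assumption.

```python
import math

# Krumhansl-Kessler profiles, index 0 = tonic.
MAJOR = [6.35, 2.23, 3.48, 2.33, 4.38, 4.09, 2.52, 5.19, 2.39, 3.66, 2.29, 2.88]
MINOR = [6.33, 2.68, 3.52, 5.38, 2.60, 3.53, 2.54, 4.75, 3.98, 2.69, 3.34, 3.17]


def pearson(x, y):
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy) if sx and sy else 0.0


class KeyTracker:
    def __init__(self, decay=0.98, interval=8):
        self.hist = [0.0] * 12
        self.decay = decay          # 2% decay per incoming note
        self.interval = interval    # assumed: re-estimate every 8th note event
        self.count = 0
        self.key = None             # (tonic pitch class, "major" or "minor")

    def add_note(self, midi_note):
        self.hist = [h * self.decay for h in self.hist]
        self.hist[midi_note % 12] += 1.0
        self.count += 1
        if self.count % self.interval == 0:
            self.key = self.estimate()
        return self.key

    def estimate(self):
        """Correlate the histogram with all 24 rotated profiles and keep the best."""
        best = None
        for tonic in range(12):
            for mode, profile in (("major", MAJOR), ("minor", MINOR)):
                rotated = [profile[(pc - tonic) % 12] for pc in range(12)]
                r = pearson(self.hist, rotated)
                if best is None or r > best[0]:
                    best = (r, tonic, mode)
        return best[1], best[2]
```

Feeding two ascending C major scales followed by a C major arpeggio leaves `tracker.key` at `(0, "major")`.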

Chord sequencer

Given the current key, the next chord is selected from a Markov chain over Roman numerals (I ii iii IV V vi vii). Candidate transitions are biased toward chords that contain the current melody note, whose weights receive a 2.5× boost; this reduces dissonance without hard rules about harmonic function. The chain advances once per bar, so the accompaniment changes in step with the musical phrase rather than on every note event.
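
The melody-biased transition step can be sketched as follows, for major keys only. The transition weights are hypothetical placeholders shaped like the I–IV–V–vi bias described here, not the shipped values; `tonic_pc` would come from the key tracker, and the caller is expected to invoke `next_chord` once per bar.

```python
import random

# Pitch classes (semitones above the tonic) of each diatonic triad in a major key.
TRIADS = {
    "I": (0, 4, 7), "ii": (2, 5, 9), "iii": (4, 7, 11), "IV": (5, 9, 0),
    "V": (7, 11, 2), "vi": (9, 0, 4), "vii": (11, 2, 5),
}

# Hypothetical hand-tuned weights favouring I-IV-V-vi motion; rows need not sum to 1.
TRANSITIONS = {
    "I":   {"I": 0.5, "ii": 1, "iii": 0.5, "IV": 3, "V": 3, "vi": 2, "vii": 0.25},
    "ii":  {"V": 4, "IV": 1, "vii": 1, "I": 0.5},
    "iii": {"vi": 3, "IV": 2, "ii": 1},
    "IV":  {"V": 3, "I": 3, "ii": 1, "vi": 1},
    "V":   {"I": 5, "vi": 2, "IV": 1},
    "vi":  {"IV": 3, "ii": 2, "V": 2, "iii": 1},
    "vii": {"I": 4, "iii": 1},
}

MELODY_BOOST = 2.5


def candidate_weights(current, melody_note, tonic_pc):
    """Transition weights from `current`, boosted for chords containing the melody note."""
    degree = (melody_note - tonic_pc) % 12
    return {
        chord: w * MELODY_BOOST if degree in TRIADS[chord] else w
        for chord, w in TRANSITIONS[current].items()
    }


def next_chord(current, melody_note, tonic_pc, rng=random):
    """Sample the next chord in proportion to the boosted weights."""
    weights = candidate_weights(current, melody_note, tonic_pc)
    chords = list(weights)
    return rng.choices(chords, weights=[weights[c] for c in chords], k=1)[0]
```

From V in C major with the melody on C, the chords containing C (I, IV, vi) have their weights multiplied by 2.5, while chords without it keep their base weight.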

Transition weights ship as hand-tuned estimates reflecting common I–IV–V–vi patterns. A training script can replace them with corpus-learned values from any folder of MIDI files; when learned_transitions.json is present, it is loaded automatically on startup.
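
The learning and loading steps reduce to counting and normalising transitions. This sketch assumes the MIDI files have already been reduced to per-bar Roman-numeral sequences, a chord-labelling step that is not shown. Apart from the filename learned_transitions.json, the function names and JSON layout are hypothetical.

```python
import json
import os
from collections import Counter, defaultdict


def learn_transitions(progressions):
    """Estimate first-order transition probabilities from Roman-numeral sequences."""
    counts = defaultdict(Counter)
    for prog in progressions:
        for prev, nxt in zip(prog, prog[1:]):
            counts[prev][nxt] += 1
    learned = {}
    for prev, row in counts.items():
        total = sum(row.values())
        learned[prev] = {nxt: n / total for nxt, n in row.items()}
    return learned


def save_transitions(table, path="learned_transitions.json"):
    with open(path, "w") as f:
        json.dump(table, f, indent=2)


def load_transitions(default, path="learned_transitions.json"):
    """Use learned weights when the file exists; otherwise keep the hand-tuned defaults."""
    if os.path.exists(path):
        with open(path) as f:
            return json.load(f)
    return default
```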

Markov transition graph — major key. Arrow weight = transition probability.

Voicing and rail planning

The voicing engine converts the chord root and type into specific MIDI notes: the left board plays the root in octave 3 (bass), and the right board covers the chord tones in octave 4. The system then solves for the optimal rail position for each board by trying every offset from −12 to +24 semitones and maximising note coverage across both boards jointly, with a small penalty for large movements. The resulting positions and a 15-bit solenoid mask for each board are sent to the Teensy as MOVE and FIRE commands over USB serial.
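
A brute-force version of the joint rail search is sketched below. It runs over all 37 × 37 offset pairs. It makes several assumptions: the 15 solenoids are treated as covering 15 consecutive semitones, each board has a hypothetical home note under solenoid 0 (48 and 60, i.e. C3 and C4 with middle C = 60), and the penalty is a hypothetical 0.01 per semitone. The `MOVE`/`FIRE` strings are a hypothetical text format, since the real serial syntax is not given here.

```python
from itertools import product

SOLENOIDS = 15
OFFSETS = range(-12, 25)        # rail offsets tried: -12 ... +24 semitones
HOME = {"L": 48, "R": 60}       # hypothetical MIDI note under solenoid 0 at offset 0
MOVE_PENALTY = 0.01             # hypothetical; max travel (72) costs < 1 covered note


def window_start(board, offset):
    return HOME[board] + offset


def covers(board, offset, note):
    start = window_start(board, offset)
    return start <= note < start + SOLENOIDS


def plan_rails(left_notes, right_notes, current):
    """Choose offsets for both boards jointly, then build one 15-bit mask per board."""
    notes = set(left_notes) | set(right_notes)
    best = None
    for l_off, r_off in product(OFFSETS, OFFSETS):
        covered = sum(1 for n in notes if covers("L", l_off, n) or covers("R", r_off, n))
        travel = abs(l_off - current["L"]) + abs(r_off - current["R"])
        score = covered - MOVE_PENALTY * travel
        if best is None or score > best[0]:
            best = (score, {"L": l_off, "R": r_off})
    offsets = best[1]
    masks = {"L": 0, "R": 0}
    for board, own in (("L", left_notes), ("R", right_notes)):
        other = "R" if board == "L" else "L"
        for n in own:
            # Prefer the board the note was voiced for; otherwise let the other board take it.
            for b in (board, other):
                if covers(b, offsets[b], n):
                    masks[b] |= 1 << (n - window_start(b, offsets[b]))
                    break
    return offsets, masks


def to_commands(offsets, masks):
    """Hypothetical text encoding of the MOVE and FIRE commands."""
    moves = [f"MOVE {b} {offsets[b]}" for b in ("L", "R")]
    fires = [f"FIRE {b} {masks[b]:015b}" for b in ("L", "R")]
    return moves + fires
```

Because the penalty for the largest possible travel stays below one note, covering an extra note always wins over staying still. Among equally complete plans, the one with the least travel is chosen.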

The Max/MSP patch receives the chord state from MIDI2Chords via a dedicated MIDI port (Voice2Piano_Harmony), displaying the active chord root, type, key, and Roman numeral degree in real time. A serial_driver.js object in the patch can also bypass MIDI2Piano.py entirely and send MOVE/FIRE commands directly to the Teensy via the serial object.

Left board — bass register, rail sliding between positions

Right board — chord tones, rail repositioning

Hardware

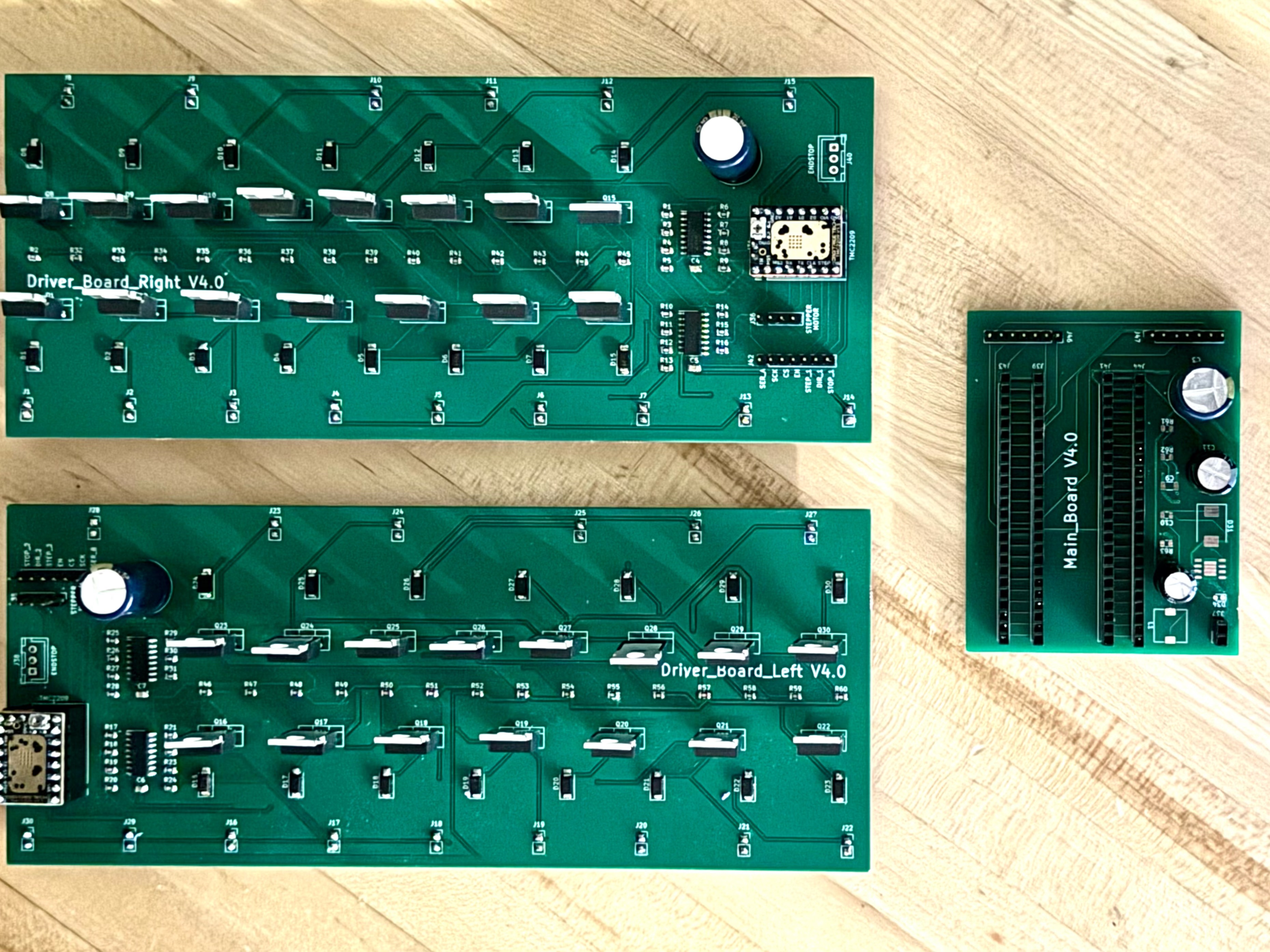

PCB

Each of the two driver boards carries 15 IRLZ34N logic-level MOSFETs, one per solenoid, driven through 100 Ω gate resistors by two daisy-chained SN74HC595 shift registers — reducing the control interface to three lines (data, clock, latch) regardless of channel count. An SS14 Schottky diode on each channel handles flyback suppression. A 1000 µF bulk capacitor on the 12 V rail absorbs inrush when multiple solenoids fire simultaneously. The Teensy clocks both boards together so both 15-channel chains latch in a single atomic pulse.
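
The shift-out sequence can be modelled on the host in Python. This is a behavioural model, not Teensy firmware. It assumes each board has its own data pin while sharing the shift clock (SRCLK) and latch (RCLK), and that chain stage i drives solenoid channel i, leaving the 16th output unused. Both wiring details are assumptions.

```python
CHAIN_BITS = 16   # two daisy-chained SN74HC595s give 16 outputs; 15 drive solenoids


def data_bits(mask):
    """Bits to present on a board's data line, one per shift-clock pulse, MSB first."""
    return [(mask >> bit) & 1 for bit in reversed(range(CHAIN_BITS))]


def shared_clock_frames(left_mask, right_mask):
    """(left_data, right_data) levels for each of the 16 shared clock pulses."""
    return list(zip(data_bits(left_mask), data_bits(right_mask)))


def simulate_chain(bits):
    """Model a 16-stage chain: each clock pushes the data bit into the first stage (QA)."""
    stages = [0] * CHAIN_BITS
    for b in bits:
        stages = [b] + stages[:-1]   # every stored bit moves one stage down the chain
    return stages                    # levels that appear on the outputs after the latch pulse
```

Shifting MSB first means that after 16 clocks, bit i of the mask sits in stage i. A single latch pulse then updates both boards' outputs together.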

Driver board 3D render

Assembled driver board